What teams can usually answer

- Which bugs are open

- Which incidents happened

- Which issues feel painful right now

Evidence-backed bug analysis pilot

Analyze tickets and linked code changes to reveal recurring failure modes, systemic patterns, and code hotspots, without prescribing what to do next.

For teams that know recurring bug pain is real, but need evidence before committing to quality investment.

The problem

The evidence for recurring causes is scattered across tickets, merge requests, diffs, and people's memory, making it hard to build a shared view.

When no one can explain why bugs cluster, quality investment loses to short-term delivery pressure.

What Ticket Triage does

Not another dashboard. A focused analysis that makes bug history usable for decisions.

Bring together bug tickets, linked merge requests, and diffs so analysis starts from real engineering history.

Expose failure modes, RCCF categories, and code areas that repeatedly show up in bug-fix work.

Provide evidence-backed leverage areas and suggested directions so teams can decide for themselves what to do next.

How it works

Keep the scope tight, keep the inputs real, and end with an evidence-backed analysis.

Three steps from historical bug evidence to a decision-ready readout.

We define the dataset together and connect your GitLab tickets, merge requests, and diffs.

The analysis surfaces recurring failure modes, systemic patterns, and hotspot areas driving repeated fix effort.

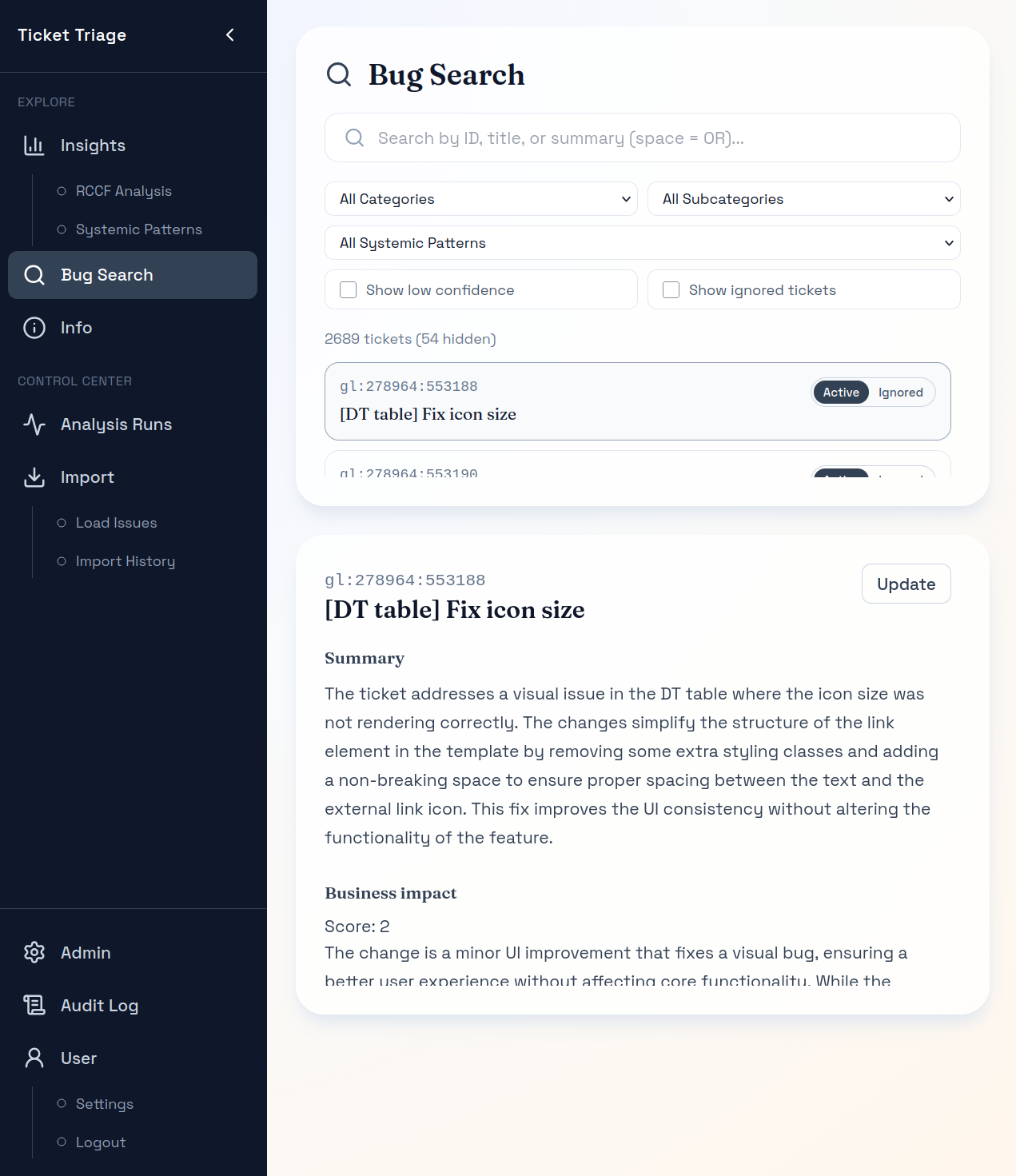

You can explore the outputs, understand the patterns, and trace every insight back to the underlying tickets and diffs.
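To make the idea of a traceable hotspot concrete, here is a minimal sketch of how files that repeatedly absorb bug-fix effort could be counted while keeping each result linked to its tickets. It is an illustration only, not how Ticket Triage is implemented: the sample records, the field names `ticket` and `files`, the function `find_hotspots`, and the `min_tickets` threshold are all hypothetical.

```python
from collections import defaultdict

# Hypothetical sample records: each bug ticket is linked to the files its fix touched.
# Field names and values are illustrative only, not the product's data model.
bug_fixes = [
    {"ticket": "BUG-101", "files": ["billing/invoice.py", "billing/tax.py"]},
    {"ticket": "BUG-117", "files": ["billing/invoice.py"]},
    {"ticket": "BUG-130", "files": ["auth/session.py", "billing/invoice.py"]},
    {"ticket": "BUG-142", "files": ["auth/session.py"]},
]


def find_hotspots(fixes, min_tickets=2):
    """Return files touched by at least `min_tickets` distinct bug fixes,
    together with the tickets that back each one, most-touched first."""
    tickets_by_file = defaultdict(set)
    for fix in fixes:
        for path in fix["files"]:
            tickets_by_file[path].add(fix["ticket"])

    hotspots = [
        (path, sorted(tickets))
        for path, tickets in tickets_by_file.items()
        if len(tickets) >= min_tickets
    ]
    # Sort by ticket count (descending), then by path for a stable order.
    hotspots.sort(key=lambda item: (-len(item[1]), item[0]))
    return hotspots


for path, tickets in find_hotspots(bug_fixes):
    print(f"{path}: {len(tickets)} bug fixes ({', '.join(tickets)})")
```

Because every hotspot carries the list of tickets behind it, a reviewer can open those tickets and check whether the pattern reflects real context.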

What you get

Engineering leaders can review, challenge, and use the results to focus their own plans.

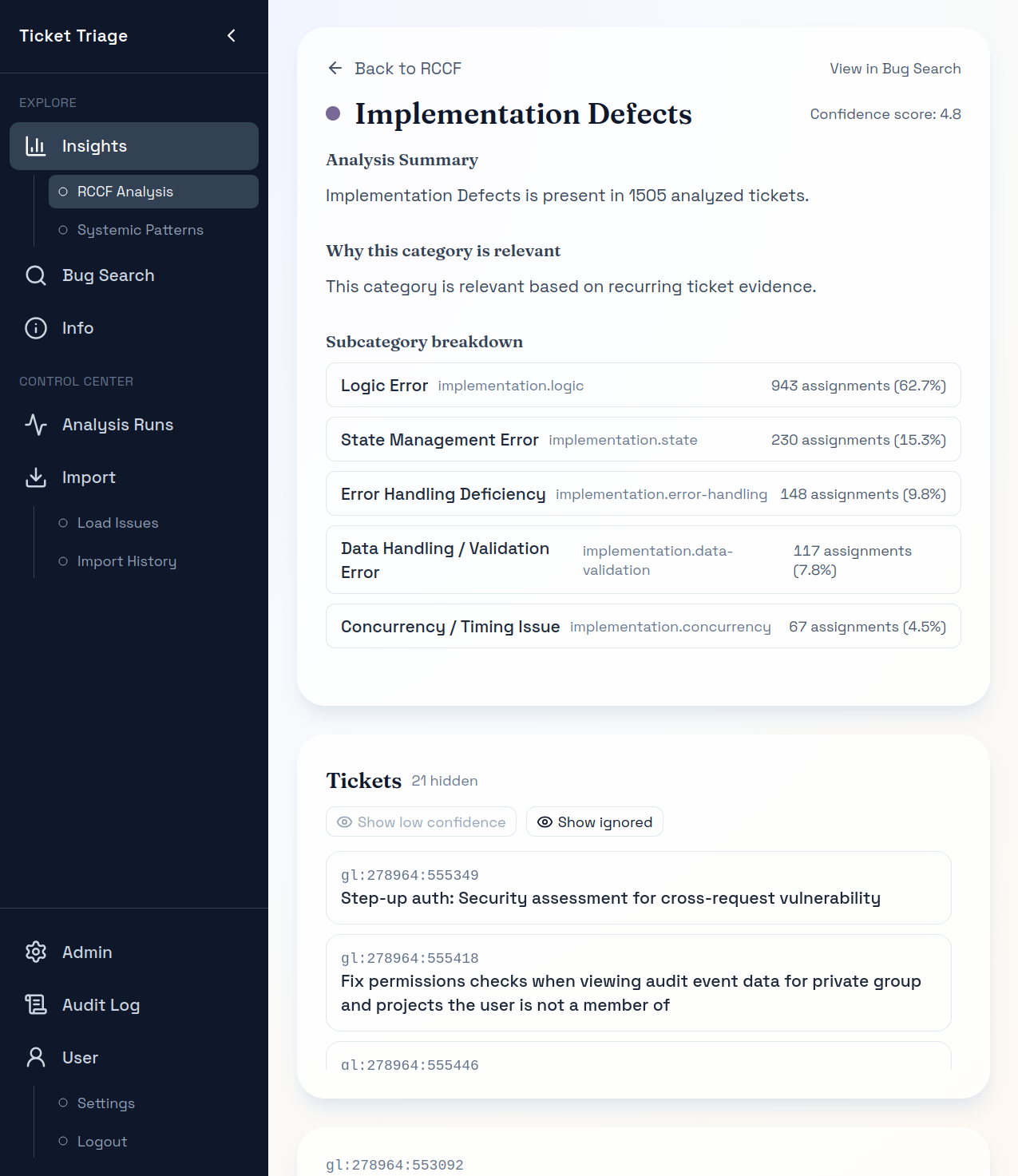

See which RCCF categories dominate your history and where leverage is likely to be highest.

Spot cross-cutting issues that show up across tickets, teams, or parts of the stack.

Highlight files and areas that repeatedly absorb bug-fix effort.

All insights bundled together – including in-product PDF export.

Proof

Examples of the evidence layer teams can inspect, challenge, and use.

Recurring patterns

Understand what drives bug-fix effort so planning starts from evidence, not opinion.

Traceability

Review the reasoning, subpatterns, and linked tickets behind each pattern.

Grounding

Open individual tickets to confirm the analysis reflects real context.

Why teams trust it

Pilot analysis

The pilot is scoped to validate fit quickly, produce a useful artifact, and keep commitment risk low.

Best fit

Included in the pilot

Pilot scope: Single system or team, GitLab issues, one code host, single analysis run, ~2-4 weeks.

Pilot price: EUR 18,000 total (EUR 12,000 setup and baseline, EUR 6,000 pilot platform and support).

Trust model: Runs in your environment, with your own OpenAI access (BYOK).

Additional connectors: Integrations beyond the default setup, such as alternatives to OpenAI or GitLab, are scoped individually and typically cost EUR 4,000 to EUR 12,000.

FAQ

Those tools help with monitoring, rule-based issues, or code-level signals. Ticket Triage focuses on historical bug reality: recurring failure modes, linked fixes, systemic patterns, and leverage areas backed by evidence.

For the current offer, yes. The pilot is designed around GitLab bug tickets and OpenAI. Other setups are possible through additional integrations, depending on scope.

You get a recurring failure-mode view, systemic pattern summary, hotspot concentration view, and a decision-ready readout with an evidence layer, including in-product PDF export.

The software powers the analysis, and during the pilot you get read-only access to explore the results rather than just a static report. The engagement is a productized, single-scope pilot before any broader rollout.

The pilot is intentionally scoped: one system or team, one ticketing source (GitLab), one code host, and an agreed historical window.

The model is customer-hosted with BYOK. Outputs stay reviewable and traceable back to real tickets and diffs.

No. We show where leverage concentrates and provide suggested directions, but teams decide the actions.

Ready to focus on leverage?

Start with one team or system, review recurring patterns in real tickets and diffs, and leave with a shared evidence base for planning.